2007/12/12

Shindig!

2007/12/09

Singularity to Launch from Adult Chat Room

2007/12/05

OpenID 2.0 Released!

2007/12/04

OAuth 1.0 Core Released!

December 4, 2007 – The OAuth Working Group is pleased to announce publication of the OAuth Core 1.0 Specification. OAuth (pronounced "Oh-Auth"), summarized as "your valet key for the web," enables developers of web-enabled software to integrate with web services on behalf of a user without requiring the user to share private credentials, such as passwords, between sites. The specification can be found at http://oauth.net/core/1.0 and supporting resources can be found at http://oauth.net.

IIW2007b Updates

Next session, Joseph Smarr of Plaxo, OpenID user experience. Good walkthrough of UI issues. Note that with directed identity in OpenID 2.0, a site can simply ask to log in a user given their service. Notes here. Using an email address is a possibility as well; clicking on a recognizable icon (AIM) to kick off an authentication process is probably the most usable path right now.

Session: OAuth Extensions; notes here.

Session: OAuth + OpenID. Use case: I have an AOL OpenID. I go to Plaxo and am offered to (1) create an account using my AOL OpenID and (2) pull in my AOL addressbook, all in one step.

Proposal: I log in via OpenID and pass in an attribute request asking for an OAuth token giving appropriate access, which lets AOL optimize the permissions page (to one page, or organize all data together). Then get token, and use token to retrieve data.

2007/11/30

Internet Identity Workshop 2007b

OpenID Commenting for Blogger!

What's particularly fun about this is that it's been a very collaborative project, bringing together Blogger engineers, 20% time from a couple of non-Blogger engineers, and last but not least some of the fine open source libraries provided by the OpenID community. Thanks all!

Tags: openid

2007/11/09

Essential Atom and AtomPub in 30 seconds

AtomPub is how to discover, create, delete, and edit those things.

Everything else is optional and/or extensions.

2007/11/02

OpenSocial Ecosystem

Obviously this is just a first step. We're all trying to build a self-sustaining ecosystem, and right now we're bootstrapping. It's a bit like terraforming: We just launched the equivalent of space ships carrying algae :).

A key next step is making it easy to create social app containers. It's not hard to build a web page that can contain Gadgets, though it could be easier. Adding the social APIs, the personal data stores, social identity, and authentication and authorization makes things a lot more complex. This is the part I'm working on, along with a lot of other people. It's a problem space I've been working in for a while on the side. Now it's time to achieve 'rough consensus and running code.'

2007/10/25

Blogger #1 "Social Networking" Site Worldwide

According to them, Blogger did 140,000,000 worldwide unique visitors in September, and has been on a tear since June. Nice! And to all those Blogger users, thank you!

Of course, whether Blogger is a "Social Networking" site depends on your definitions; Dare wants to disqualify the front runner. Me? I think 140 million people can speak for themselves.

2007/10/23

Fireblog

2007/10/22

A Four Year Mission... to Boldly Go Where No Protocol has Gone Before

The Atom Publishing Format and Protocol WG (atompub) in the Application Area has concluded.

...

The AtomPub WG was chartered to work on two items: the syndication format in RFC4287, and the publishing protocol in RFC5023. Implementations of these specs have been shown to work together and interoperate well to support publishing and syndication of text content and media resources.

Since both documents are now Proposed Standards, the WG has completed its charter and therefore closes as a WG. A mailing list will remain open for further discussion.

Congratulations and thanks to the chairs Tim Bray and Paul Hoffman, to the editors of the two WG RFCs (Mark Nottingham, Robert Sayre, Bill de Hora and Joe Gregorio), and to the many contributors and implementors.

2007/10/14

Widget Summit

2007/10/08

The Atom Publishing Protocol is here!

2007/09/21

OAuth: Your valet key for the Web

Just published at http://oauth.net/documentation/spec: Draft 1 of the OAuth specification. As my day job allows, I've been contributing to the OAuth working group. We'd love feedback.

What is it?

OAuth is like a valet key for all your web services. A valet key lets you give a valet the ability to park your car, but not the ability to get into the trunk or drive more than 2 miles or redline the RPMs on your high end German automobile. In the same way, an OAuth key lets you give a web agent the ability to check your web mail but NOT the ability to pretend to be you and send mail to everybody in your address book.
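The valet-key idea can be sketched in a few lines. This is only an illustration of scoped, revocable delegation, not the OAuth wire protocol (no signatures, no redirects); the `ValetKeys` class and the action names `read_mail` and `send_mail` are hypothetical.

```python
import secrets


class ValetKeys:
    """Toy in-memory registry of delegated keys (hypothetical, not the OAuth protocol)."""

    def __init__(self):
        # token -> (user, set of actions the key holder may perform)
        self._grants = {}

    def issue(self, user, actions):
        """Hand out an unguessable key limited to the given actions."""
        token = secrets.token_urlsafe(16)
        self._grants[token] = (user, frozenset(actions))
        return token

    def allows(self, token, action):
        """True only if the key exists and covers this action."""
        grant = self._grants.get(token)
        return grant is not None and action in grant[1]

    def revoke(self, token):
        """Take the key back; later checks fail."""
        self._grants.pop(token, None)


keys = ValetKeys()
mail_checker_key = keys.issue("alice", {"read_mail"})
can_read = keys.allows(mail_checker_key, "read_mail")
can_send = keys.allows(mail_checker_key, "send_mail")
keys.revoke(mail_checker_key)
can_read_after_revoke = keys.allows(mail_checker_key, "read_mail")
```

The agent never sees the user's password; it only ever holds a key that can be checked, limited, and withdrawn.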

Today, basically there are two ways to let a web agent check your mail. You give it your username and password, or it uses one of a variety of special-purpose proprietary APIs like AuthSub, BBAuth, OpenAuth, Flickr Auth, etc. to send you over to the web mail site and get your permission, then come back. Except that mostly agents don't implement the proprietary APIs, and just demand your username and password. So you sigh and give it to them, and hope they don't rev the engine too hard or spam all your friends. We hope OAuth will change that.

OAuth consolidates all of those existing APIs into a single common standard that everybody can write code to. It explicitly does not standardize the authentication step, meaning that it will work fine with current authentication schemes, Infocard, OpenID, retinal scans, or anything else.

And yes, it will work for AtomPub and other REST services, and I hope it will be the very last authorization protocol your client ever needs to add for those things.

For more information and ongoing updates, go to http://oauth.net/.

Note: I picked up the "valet key" metaphor from Eran's postings. Thanks Eran!

2007/09/16

"We have lost control of the apparatus" -- Raganwald

Our users are being exposed to applications we don’t control. And it messes things up. You see, the users get exposed to other ways of doing things, ways that are more convenient for users, ways that make them more productive, and they incorrectly think we ought to do things that way for them.

I sure hope this part is true:

You would think things couldn’t get any worse. But they are worse, much worse. I’ll just say one word. Google. Those bastards are practically the home page of the Internet. Which means, to a close approximation, they are the most popular application in the world.

And what have they taught our users? Full-text search wins. Please, don’t lecture me, we had this discussion way back when we talked about fields. Users know how to use Google. If you give them a search page with a field for searching the account number and a field for searching the SSN and a field for searching the zip code and a field for searching the phone number, they want to know why they can’t just type 4165558734 and find Reg by phone number? (And right after we make that work for them, those greedy and ungrateful sods’ll want to type (416) 555-8734 and have it work too. Bastards.)

2007/09/14

2007/08/16

Do you trust your friends with your URLs?

Please correct me if I’m wrong about this; I want to be wrong about this. Or I want to learn that Facebook has already considered and dealt with the issue and it’s just not readily apparent to me. But I’m thinking that Facebook’s feeds for Status Updates, Notes, and Posted Items must in many instances be at odds with privacy settings that attempt to limit users’ Facebook activities to “friends only” (or are even more restrictive).

Denise is both right and wrong. The basic issue is that once you give out a feed URL (which is not guessable) to a friend, they can then give it out to their friends and their friends... ad infinitum. These people can then get your ongoing updates, without you explicitly adding them.

Of course, this requires your friends to breach the trust you placed in them to guard your bits. Notice that even without feeds, your friends can easily copy and paste your bits and send them on manually. It's a simple matter to automate this if a friend really wants to broadcast your private data to whoever they want. So as soon as you open up your data, you are vulnerable to this. To prevent it you'd need working DRM; not a good path to go down.

It would be possible to access control the feeds; there's even a nascent standard (OAuth) for doing this in a secure and standards compliant way. But even this doesn't prevent your friends from copying your bits.

A much simpler approach is to hand out a different URL for each friend. They're still obfuscated of course. You can then block a friend (and anyone they've shared the URL with) from seeing future updates at any time. This is about the best that can be done. Update: This is apparently exactly what Facebook has done. Denise is still concerned that friends could accidentally or purposefully re-share the data, since the feed format makes it easy to do so.
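A minimal sketch of the per-friend URL approach, assuming an in-memory mapping and a made-up base URL; `FeedLinks` and its method names are hypothetical and say nothing about how Facebook actually implements its feeds.

```python
import secrets


class FeedLinks:
    """Hands each friend a distinct, unguessable feed URL that can be cut off later."""

    def __init__(self, base_url):
        self.base_url = base_url.rstrip("/")
        self._token_to_friend = {}

    def url_for(self, friend):
        """Mint a fresh obfuscated URL tied to one friend."""
        token = secrets.token_urlsafe(24)
        self._token_to_friend[token] = friend
        return f"{self.base_url}/feed/{token}"

    def block(self, friend):
        """Invalidate every URL given to this friend (and anyone they shared it with)."""
        stale = [t for t, f in self._token_to_friend.items() if f == friend]
        for token in stale:
            del self._token_to_friend[token]

    def resolve(self, url):
        """Return the friend a URL was issued to, or None if it is unknown or blocked."""
        token = url.rsplit("/", 1)[-1]
        return self._token_to_friend.get(token)


links = FeedLinks("https://example.invalid/me")
pete_url = links.url_for("pete")
denise_url = links.url_for("denise")
links.block("pete")
```

Blocking stops future updates only; anything already fetched through the old URL has been copied and cannot be recalled.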

Facebook's messaging could definitely be improved. Suggestions?

2007/08/07

RESTful partial updates: PATCH+Ranges

This works, but it doesn't scale well to large resources or high update rates, where "large" and "high" are relative to your budget for bandwidth and tolerance for latency. It also means that you can't simply and safely say "change field X, overwriting whatever is there, but leave everything else as-is".

I've seen the same thought process recapitulated a few times now on how to solve this problem in a RESTful way. The first thing that springs to mind is to ask if PUT can be used to send just the part you want to change. This can be made to work but has some major problems that make it a poor general choice.

- A PUT to a resource generally means "replace", not "update", so it's semantically surprising.

- In theory it could break write-through caches. (This is probably equivalent to endangering unicorns.)

- It doesn't work for deleting optional fields or updating flexible lists such as Atom categories.

A good solution to the partial update problem would be efficient, address the canonical scenario above, be applicable to a wide range of cases, not conflict with HTTP, extend basic HTTP as little as possible, deal with optimistic concurrency control, and deal with the lost update problem. The method should be discoverable (clients should be able to tell if a server supports the method before trying it). It would also be nice if the solution would let us treat data symmetrically, both getting and putting sub-parts of resources as needed and using the same syntax.
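The optimistic concurrency and lost update requirements can be illustrated with ETags and If-Match. The sketch below is a toy in-memory model, not a real HTTP server; the `Resource` class, the hash-based ETag, and the choice of 204 for success are assumptions made for the example.

```python
import hashlib


class Resource:
    """Toy resource whose ETag changes whenever its body changes."""

    def __init__(self, body):
        self.body = body

    @property
    def etag(self):
        # Derive a validator from the current body (one possible strategy).
        digest = hashlib.sha256(self.body.encode("utf-8")).hexdigest()[:16]
        return '"' + digest + '"'

    def put(self, new_body, if_match):
        """Apply the write only if the client saw the current version."""
        if if_match != self.etag:
            return 412  # Precondition Failed: someone else updated first
        self.body = new_body
        return 204


doc = Resource("<contact><work_phone>650-555-1212</work_phone></contact>")
seen_by_both_clients = doc.etag

# Client A writes first and succeeds.
first = doc.put("<contact><work_phone>408-555-1591</work_phone></contact>",
                if_match=seen_by_both_clients)
# Client B still holds the old ETag, so its write is refused instead of
# silently overwriting A's change.
second = doc.put("<contact><work_phone>415-555-0000</work_phone></contact>",
                 if_match=seen_by_both_clients)
```

Whatever partial update method is chosen, it needs to carry a precondition like this to avoid lost updates.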

There are three contenders for a general solution pattern:

Expose Parts as Resources. PUT to a sub-resource represents a resource's sub-elements with their own URIs. This is in spirit what Web3S does. However, it pushes the complexity elsewhere: Into discovering the URIs of sub-elements, and into how ETags work across two resources that are internally related. Web3S appears to handle only hierarchical sub-resources, not slicing or arbitrary selections.

Accept Ranges on PUTs. Ranged PUT leverages and extends the existing HTTP Content-Range: header to allow a client to specify a sub-part of a resource, not necessarily just byte ranges but even things like XPath expressions. Ranges are well understood in the case of GET but were rejected as problematic for PUT a while back by the HTTP working group. The biggest concern was that it adds a problematic must-understand requirement. If a server or intermediary accepts a PUT but doesn't understand that it's just for a sub-range of the target resource, it could destroy data. But, this does allow for symmetry in reading and writing. As an aside, the HTTP spec appears to contradict itself about whether range headers are extensible or are restricted to just byte ranges. This method works fine with ETags; additional methods for discovery need to be specified but could be done easily.

Use PATCH. PATCH is a method that's been talked about for a while but is the subject of some controversy. James Snell has revived Lisa Dusseault's draft PATCH RFC[6] and updated it, and he's looking for comments on the new version. I think this is a pretty good approach with a few caveats. The PATCH method may not be supported by intermediaries, but if it fails it does fail safely. It requires a new verb, which is slightly painful. It allows for a variety of patching methods via MIME types. It's unfortunately asymmetric in that it does not address the retrieval of sub-resources. It works fine with ETags. It's discoverable via HTTP headers (OPTIONS and Allow: PATCH).

The biggest issue with PATCH is the new verb. It's possible that intermediaries may fail to support it, or actively block it. This is not too bad, since PATCH is just an optimization -- if you can't use it, you can fall back to PUT. Or use https, which effectively tunnels through most intermediaries.
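The discover-then-fall-back logic might look roughly like this on the client side. It is a sketch under assumptions: `plan_update` is a hypothetical helper, the delta media type is invented, and the function only builds a description of the request rather than sending anything.

```python
def plan_update(allow_header, etag, full_document, delta,
                delta_type="application/x-example-delta"):
    """Pick PATCH when the server's Allow header lists it, otherwise fall back to PUT.

    allow_header is the value of the Allow header from an OPTIONS response.
    delta_type is a made-up media type standing in for whatever delta format
    the server and client agree on.
    """
    allowed = {m.strip().upper() for m in allow_header.split(",") if m.strip()}
    headers = {"If-Match": etag}  # guard against lost updates either way
    if "PATCH" in allowed:
        headers["Content-Type"] = delta_type
        return {"method": "PATCH", "headers": headers, "body": delta}
    # PATCH is only an optimization: send the whole document instead.
    headers["Content-Type"] = "application/xml"
    return {"method": "PUT", "headers": headers, "body": full_document}


with_patch = plan_update("GET, PUT, PATCH", '"v1"', "<whole/>", "<change/>")
without_patch = plan_update("GET, PUT", '"v1"', "<whole/>", "<change/>")
```

If an intermediary blocks the PATCH at request time rather than at discovery time, the same fallback applies: retry as a PUT with the full document.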

On balance, I like PATCH. The controversy over the alternatives seems to justify the new verb. It solves the problem and I'd be happy with it. I would like there to be a couple of default delta formats defined with the RFC.

The only thing missing is symmetrical retrieval/update. But, there's an interesting coda: PATCH is defined so that Content-Range is must-understand on PATCH[6]:

The server MUST NOT ignore any Content-* (e.g. Content-Range)
headers that it does not understand or implement and MUST return
a 501 (Not Implemented) response in such cases.

So let's say a server wanted to be symmetric; it could advertise support for XPath-based ranges on both GET and PATCH. A client would use PATCH with a range to send back exactly the same data structure it retrieved earlier with GET. An example:

GET /abook.xml
Range: xpath=/contacts/contact[name="Joe"]/work_phone

which retrieves the XML:

<contacts><contact><work_phone>650-555-1212</work_phone>
</contact></contacts>

Updating the phone number is very symmetrical with PATCH+Ranges:

PATCH /abook.xml
Content-Range: xpath=/contacts/contact[name="Joe"]/work_phone

<contacts><contact><work_phone>408-555-1591</work_phone>
</contact></contacts>

The nice thing about this is that no new MIME types need to be invented; the Content-Range header alerts the server that the stuff you're sending is just a fragment; intermediaries will either understand this or fail cleanly; and the retrievals and updates are symmetrical.
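To make the PATCH+Ranges exchange concrete, here is a rough server-side sketch using Python's standard ElementTree, which supports only a small XPath subset. The function names, the sample address book (including the second contact), and the 204/409 status choices are assumptions for illustration; only the 501 for an unrecognized Content-Range comes from the draft quoted above.

```python
import re
import xml.etree.ElementTree as ET

# Sample address book; the second contact is made-up filler data.
ABOOK = (
    "<contacts>"
    "<contact><name>Joe</name><work_phone>650-555-1212</work_phone></contact>"
    "<contact><name>Ann</name><work_phone>415-555-0100</work_phone></contact>"
    "</contacts>"
)


def select(root, xpath):
    """Resolve an absolute path such as /contacts/contact[name='Joe']/work_phone.

    ElementTree cannot evaluate absolute paths on an element, so the first
    step is checked against the root tag and the rest is evaluated relatively.
    """
    if not xpath.startswith("/"):
        return []
    first, _, rest = xpath[1:].partition("/")
    if first != root.tag:
        return []
    return root.findall(rest) if rest else [root]


def handle_patch(root, content_range, body):
    """Apply a fragment to the part of the document named by Content-Range."""
    unit, _, xpath = content_range.partition("=")
    if unit != "xpath":
        return 501  # must-understand: refuse ranges we cannot interpret
    targets = select(root, xpath)
    # The fragment mirrors the document's shape but carries no predicates.
    fragment = ET.fromstring(body)
    sources = select(fragment, re.sub(r"\[[^\]]*\]", "", xpath))
    if len(targets) != 1 or len(sources) != 1:
        return 409  # assumption: treat an ambiguous or missing target as a conflict
    targets[0].text = sources[0].text
    return 204


root = ET.fromstring(ABOOK)
joe_phone = "/contacts/contact[name='Joe']/work_phone"
before = [el.text for el in select(root, joe_phone)]
status = handle_patch(
    root,
    "xpath=" + joe_phone,
    "<contacts><contact><work_phone>408-555-1591</work_phone></contact></contacts>",
)
after = [el.text for el in select(root, joe_phone)]
unsupported = handle_patch(root, "bytes=0-10", "ignored")
```

The same `select` call serves both the read and the write, which is the symmetry the post is after.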

[1]http://www.snellspace.com/wp/?p=683

[2]http://www.25hoursaday.com/weblog/2007/06/09/WhyGDataAPPFailsAsAGeneralPurposeEditingProtocolForTheWeb.aspx

[3]http://www.dehora.net/journal/2007/06/app_on_the_web_has_failed_miserably_utterly_and_completely.html

[4]http://tech.groups.yahoo.com/group/rest-discuss/message/8412

[5]http://tech.groups.yahoo.com/group/rest-discuss/message/9118

[6]http://www.ietf.org/internet-drafts/draft-dusseault-http-patch-08.txt

Some thoughts on "Some Thoughts on Open Social Networks"

"Content Hosted on the Site Not Viewable By the General Public and not Indexed by Search Engines: As a user of Facebook, I consider this a feature not a bug."

Dare goes on to make some great points about situations where he's needed to put some access controls in place for some content. I could equally make some points about situations where exposing certain content as globally as possible has opened up new opportunities and been a very positive thing for me. After which, I think we'd both agree that it's important to be able to put users in control.

Dare: "Inability to Export My Content from the Social Network: This is something that geeks complain about ... danah boyd has pointed out in her research that many young users of social networking sites consider their profiles to be ephemeral ... For working professionals, things are a little different since they may have created content that has value outside the service (e.g. work-related blog postings related to their field of endeavor) so allowing data export in that context actually does serve a legitimate user need."

It isn't just a data export problem, it's a reputation preservation problem too. Basically, as soon as you want to keep your reputation (identity), you want to be able to keep your history. It's not a problem for most younger users since they're experimenting with identities anyway. Funny thing, though: Younger users tend to get older. At some point in the not so distant future that legitimate user need is going to be a majority user need.

Dare: "It is clear that a well-thought out API strategy that drives people to your site while not restricting your users combined with a great user experience on your website is a winning combination. Unfortunately, it's easier said than done."

+1. Total agreement.

Dare: "Being able to Interact with People from Different Social Networks from Your Preferred Social Network: I'm on Facebook and my fiancée is on MySpace. Wouldn't it be great if we could friend each other and send private messages without both being on the same service? It is likely that there is a lot of unvoiced demand for this functionality but it likely won't happen anytime soon for business reasons..."

Will there be a viable business model in meeting the demand that Dare identifies, one which is strong enough to disrupt business models dependent on a walled garden? IM is certainly a cautionary tale, but there are some key differences between IM silos and social networking sites. One is that social networking sites are of the Web in a way that IM is not -- specifically they thrive in a cross-dependent ecosystem of widgets, apps, snippets, feeds, and links. It's possible that "cooptition" will be more prevalent than pure competition. And it's quite possible for a social network to do violently antisocial things and drive people away as Friendster did, or simply have a hot competitor steal people away as Facebook is doing. Facebook's very success argues against the idea that there will be a stable detente among competing social network systems.

Relationship requires identity

Let's face it, relationship silos are really just extensions of identity silos. The problem of having to create and re-create my relationships as I go from site to site mirrors my problem of having to create and re-create my identity as I go from site to site. The Facebook Platform might have one of the better Identity Provider APIs, but all the applications built on it still have to stay within Facebook itself.

Yup. Which is the primary reason that I've been interested in identity -- it's a fundamental building block for social interactions of all kinds. And think of what could happen if you could use the Internet as your social network as easily as you can use Facebook today. As Scott Gilbertson at Wired discovered, it's not hard to replicate most of the functionality; it's the people who are "on" Facebook which makes it compelling.

2007/08/06

2007/08/02

cat Google Spreadsheets | Venus > my.feed

Note that this requires the data to be publicly readable on the Spreadsheets side, which is fine for this use. A lot more uses would be enabled with a lingua franca for deputizing services to talk securely to each other.

2007/07/27

Blogger is looking for engineers!

2007/07/24

AtomPub now a Proposed Standard

Share your dog's name, lose your identity?

Credit information group Equifax said members of sites such as MySpace, Bebo and Facebook may be putting too many details about themselves online.

It said fraudsters could use these details to steal someone's identity and apply for credit and benefits.

So, to protect the credit bureaus' business models, we're all supposed to try to hide every mundane detail of our lives? The name of my dog is not a secret; if credit bureaus assume it is, they are making a mistake.

Here's the solution: Make the credit bureaus fiscally responsible for identity theft, with penalties for failing to use good security practices.

2007/07/19

Open Authorization, Permissions, and Socially Enabled Security

Consider a user navigating web services and granting various levels of permissions to mash-ups; a mash-up might request the right to read someone's location and write to their Twitter stream, for example. The first time this happens, the user would be asked something like this:

The TwiLoc service is asking to do the following on an ongoing basis:

- Read your current location from AIM, and

- Create messages on your behalf in Twitter.

How does this sound?

[ ] No [ ] Yes [ ] Yes, but only for today

The user would also have a way to see what permissions they've granted, how often they've been used (ideally), and be able to revoke them at any time.

Now, of course, users will just click through and say "Yes" most of the time on these. But there's a twist; since you're essentially mapping out a graph of web services, requested operations, granted permissions, usage, and revocations, you start to build up a fairly detailed picture of what services are out there and what precisely they're doing. You also find out what services people trust. Throw out the people who always click "yes" to everything, and you could even start to get some useful data.

You can also combine with social networks. What if you could say, "by default, trust whatever my buddy Pete trusts"? Or, "trust the consensus of my set of friends; only ask me if there's disagreement"? Or more prosaically, "trust what my local IT department says".
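A small sketch of the "trust the consensus of my friends, only ask me if there's disagreement" rule. The data shapes, the `decide` function, and the choice to let an IT policy override friends are all assumptions; the post does not specify any of them.

```python
def decide(request, friend_decisions, it_policy=None):
    """Return "grant", "deny", or "ask" for a (service, permission) request.

    friend_decisions maps each friend to their own {request: bool} choices.
    it_policy, if given, is a {request: bool} mapping that wins outright
    (that precedence is an assumption for this sketch).
    """
    if it_policy and request in it_policy:
        return "grant" if it_policy[request] else "deny"
    votes = {choices[request]
             for choices in friend_decisions.values()
             if request in choices}
    if votes == {True}:
        return "grant"
    if votes == {False}:
        return "deny"
    # No opinions at all, or friends disagree: fall back to asking the user.
    return "ask"


read_location = ("TwiLoc", "read location from AIM")
post_twitter = ("TwiLoc", "create messages in Twitter")
friends = {
    "pete": {read_location: True, post_twitter: True},
    "suw": {read_location: True, post_twitter: False},
}
location_answer = decide(read_location, friends)
twitter_answer = decide(post_twitter, friends)
unknown_answer = decide(("Other", "read mail"), friends)
```

Filtering out friends who say yes to everything, as suggested earlier, would be a preprocessing step on `friend_decisions`.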

2007/07/18

At Mashup Camp today and tomorrow

Every mashup attempts to expand...

Every mashup attempts to expand until it can do social networking. Those that can't are replaced by those that can.

(With apologies to Jamie Zawinski.)

2007/07/10

Implications of OpenID, and how it can help with phishing

So the implication is that the security policies that you currently have around "forgot your password" are a good starting point for thinking about OpenID security. Specifically phishing vulnerabilities and mitigations are likely to be similar. However, OpenID also changes the ecosystem by introducing a standard that other solutions can build on (such as Verisign's Seat Belt plugin).

OpenID really solves only one small problem -- proving that you own a URL. But by solving this problem in a standard, simple, deployable way, it provides a foundation for other solutions.

It doesn't solve the phishing problem. Some argue that it makes it worse by training users to follow links or forms from untrusted web sites to the form where they enter a password. My take: Relying on user education alone is not a solution. If you can reduce the number of places where a user actually needs to authenticate to something manageable, like say half a dozen per person, then we can leverage technical and social aids much more effectively than we do now. In this sense, OpenID offers opportunities as well as dangers. Of course, this would be true of any phishing solution.

2007/07/09

Disorder, Delamination, David Weinberger

Anyway. David's now posted a new essay well worth reading, Delamination Now!. Also, well worth acting on. Money quote: "[T]he carriers are playing us like a violin."

2007/07/08

There she blows! (The Moby Dick Theory of Big Companies)

The Pmarca Guide to Startups, part 5: The Moby Dick theory of big companies

[1] In the same whale as pmarca in fact, though in a somewhat different location along the alimentary tract.

2007/07/07

35 views of social networking

35 Perspectives on Online Social Networking: One fewer view than Hokusai's Mt. Fuji series. Just as much diversity.

2007/07/06

A FULL INTERACTIVE SHELL!

IT GIVES YOU A FULL INTERACTIVE SHELL

I REPEAT, A FULL INTERACTIVE SHELL

Nice.

2007/07/05

Fireworks, Social Compacts, and Emergent Order

Then, we left (quickly, to avoid the crowds) and immediately got snarled in traffic. Of course everyone was leaving at the same time so we expected it to be slow, but we were literally not moving for a quarter of an hour. After a while we figured out that we couldn't move because other cars kept joining the queue ahead of us from other parking lots. Around this time, other people started figuring this out too and started going through those same parking lots to jump ahead. This solution to the prisoner's dilemma took about 30 minutes to really begin to cascade: Everyone else began to drive through parking lots, under police tape, on the wrong side of the road, cutting ahead wherever they could to avoid being the sucker stuck at the end of the never-moving priority queue. (Full disclosure: I drove across a parking lot to get over to the main road where traffic was moving, but violated no traffic laws.)

I wonder how the results would have been different if the people involved could communicate efficiently instead of being trapped incommunicado in their cars. I bet every single car had at least one cell phone in it, many with GPS. Imagine an ad hoc network based on cell phones and GPS, communicating about traffic flow -- nothing more complicated than speed plus location and direction, and maybe a "don't head this way" alert. It'd be interesting to try.

2007/07/01

Theory P or Theory D?

Theory P adherents believe that there are lies, damned lies, and software development estimates. ... Theory P adherents believe that the most important element of successful software development is learning.

Maybe I'm an extreme P adherent; I say that learning is everything in software development. The results of this learning are captured in code where possible, human minds where not. Absolutely everything else associated with software development can and will be automated away.

Finally:

To date, Theory P is the clear winner on the evidence, and it’s not even close. Like any reasonable theory, it explains what we have observed to date and makes predictions that are tested empirically every day.

Theory D, on the other hand, is the overwhelming winner in the marketplace, and again it’s not even close. The vast majority of software development projects are managed according to Theory D, with large, heavyweight investments in design and planning in advance, very little tolerance for deviation from the plan, and a belief that good planning can make up for poor execution by contributors.

Does Theory D reflect reality? From the perspective of effective software development, I do not believe so. However, from the perspective of organizational culture, theory D is reality, and you ignore it at your peril.

So this is a clear contradiction. Why is it that theory D is so successful (at replicating itself if nothing else) while theory P languishes (at replicating)? Perhaps D offers clear benefits to its adherents within large organizations -- status, power, large reporting trees... and thus P can't gain a foothold despite offering clear organization-level benefits.

But I suspect that it's simpler than that; I think that people simply don't really evaluate history or data objectively. Also, it may be difficult for people without the technical background to really understand how difficult some problems are; past a certain level of functionality, it's all equally magic. The size of the team that accomplished a task then becomes a proxy for its level of difficulty, in the way that high prices become a proxy for the quality of a product in the marketplace for the majority of consumers. So small teams, by this measure, must not be accomplishing much, and if they do, it's a fluke that can be explained away in hindsight with a bit of work.

Somebody should do a dissertation on this...

2007/06/28

Does social software have fangs? And, can it organize itself?

Suw reported that corporate users tend to impose their existing corporate hierarchy on the flat namespace of their Wikis, which is fine but may not be exploiting the medium to its full potential. And Wiki search tends to be at best mediocre. Has anyone looked at leveraging user edit histories to infer page clusters? I could imagine an autogenerated Wiki page which represented a suggested cluster, with a way for people to edit the page and add meaningful titles and annotations to help with search, which could serve as an alternative index to at least part of a site.
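One way the edit-history idea could be prototyped: treat each page as the set of people who edited it, link pages whose editor sets overlap strongly, and report the connected groups as suggested clusters. This is a speculative sketch; the Jaccard measure, the 0.5 threshold, and the `cluster_pages` name are my assumptions, not anything presented in the session.

```python
from itertools import combinations


def cluster_pages(edits, threshold=0.5):
    """Group pages whose sets of editors overlap by at least `threshold` (Jaccard).

    edits is an iterable of (user, page) pairs taken from a wiki's history.
    Returns a list of sets of page names.
    """
    editors = {}
    for user, page in edits:
        editors.setdefault(page, set()).add(user)

    # Union-find over pages, so linked pages end up in one group.
    parent = {page: page for page in editors}

    def find(page):
        while parent[page] != page:
            parent[page] = parent[parent[page]]
            page = parent[page]
        return page

    for a, b in combinations(sorted(editors), 2):
        shared = len(editors[a] & editors[b])
        total = len(editors[a] | editors[b])
        if shared / total >= threshold:
            parent[find(a)] = find(b)

    clusters = {}
    for page in editors:
        clusters.setdefault(find(page), set()).add(page)
    return sorted(clusters.values(), key=sorted)


history = [
    ("ann", "Budget"), ("bob", "Budget"),
    ("ann", "Forecast"), ("bob", "Forecast"),
    ("cat", "Picnic"),
]
suggested = cluster_pages(history)
```

Each resulting group could seed an autogenerated index page that people then title and annotate by hand.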

2007/06/22

Identity Panel at Supernova, or How I Learned to Stop Worrying and Love User Centric Identity

(John Clippinger, Kaliya Hamlin, Reid Hoffman, Marcien Jenckes, Jyri Engestrom)

As our lives increasingly straddle the physical and the virtual worlds, the management of identity becomes increasingly crucial from both a business and a social standpoint. The future of e-commerce and digital life will require identity mechanisms that are scalable, secure, widely-adopted, user-empowering, and at least as richly textured as their offline equivalents. This session will examine how online identity can foster relationships and deeper value creation.

OpenID is definitely very simple, very focused on doing just one part of identity. It enables the unbundling of identity, authentication, and services. It lets you say "this X is the same as this other X from last week, or from this other site" in a verifiable way that's under the control of X. Is there a better word for this than "identity"?

Also, every discussion of OpenID should start out with a simple demo: I type =john.panzer at any web site, and it lets me in. Then talk about the underpinnings and the complications after the demo.

Tags: supernova2007, identity, OpenID

2007/06/20

Poll: Best Atom Publishing Protocol abbreviation?

Will Copyright Kill Social Media? (Supernova)

Everyone agreed that copyright won't kill social media, though it will shape it (that which does not kill you makes you stronger?) Unfortunately we ran out of time before I was able to ask the following, so I'll just blog them instead.

(Moderator Denise Howell, Ron Dreben, Fred von Lohmann, Mary Hodder, Mark Morril, Zahavah Levine)

The promise of social networks, video sharing, and online communities goes hand-in-hand with the challenge of unauthorized use. Is social media thriving through misappropriation of the creativity of others? Or are the responses to that concern actually the greater problem?

-- Will Copyright Kill Social Media?

Mark Morril was very reasonable for the most part, but made two outrageous claims: that DRM is pro-consumer, and that we should be able to filter on upload for copyright violations. The first claim is, I think, simply ridiculous, especially when the architect of the DMCA says that the DRM provisions have failed to achieve their effect and consumers are rejecting DRM wherever they have a choice. You can say it's needed for some business model, or required to make profit, but I don't see how you can say it's pro-consumer with a straight face.

On filtering, Zahavah Levine pointed out that copyright isn't like porn; there's nothing in the content itself that lets you know who the uploader really is and whether they own any necessary rights. But even if you had this, it seems to me that you'd need an artificial lawyer to have a scalable solution. (GLawyer?)

On the technical side, I heard one thing that isn't surprising: That it would be very helpful to have a way for rights holders to be able to assert their rights in a verifiable way. An opt-in copyright registration scheme that provided verifiability might be a step forward here. Alternatively, perhaps a distributed scheme based on verifiable identities and compulsory licenses might be worth looking at.

2007/06/19

Going Supernova

2007/06/18

In Which I Refute Web3S's Critiques Thusly

Hierarchy

Turtles all the way down:

<entry>

...

<content type="application/atom+xml">

<feed> ... it's turtles all the way down! ... </feed>

</content>

</entry>

Merging

I think this is orthogonal, but there's already a proposed extension to APP: Partial Updates. Which uses (revives?) PATCH rather than inventing a new verb or overloading PUT on the same resource. I'm neutral on the PATCH vs. POST or PUT thing, except to note that it's useful to be able to 'reset' a resource's state, so having the ability to allow this via either two verbs or two URIs is useful too. I'm a little confused though since Yaron says that they're using PUT for merging but they're also defining UPDATE as a general purpose atomic delta -- so why do you need to overload PUT?

I need to think about the implications of extending the semantics of ETags to cover children of containers as well as the container.

I do like Web3S's ability to address sub-elements individually via URIs; APP provides this out of the box for feeds and entries, but not for fields within an entry. It's not difficult to imagine an extension for doing so that would fit seamlessly within APP though.

I think it'd be interesting to look at an APP+3S (APP plus 2-3 extensions) to see how it would compare against Web3S, and whether the advantages of a stable, standard base do or do not outweigh the disadvantages of starting from something not tailored for your solution. Certainly the issues raised by Yaron are fairly generic and do need solutions; they're not new; and the thinking of the APP WG has pretty much been that these sorts of things are best dealt with via extensions.

2007/06/16

Social Network Partition

Tags: social networks, partition, lions

2007/06/14

Is the Atom Publishing Protocol the Answer?

Dare Obasanjo raised a few hackles with a provocative post (Why GData/APP Fails as a General Purpose Editing Protocol for the Web). In a followup (GData isn't a Best Practice Implementation of the Atom Publishing Protocol) he notes that GData != APP. DeWitt Clinton of Google follows up with a refinement of this equation to GData > APP_t where t < now in On APP and GData.

I hope this clarifies things for everybody.

There seems to be a complaint that outside of the tiny corner of the Web comprised of web pages, news stories, articles, blog posts, comments, lists of links, podcasts, online photo albums, video albums, directory listings, search results, ... Atom doesn't match some data models. This boils down to two issues, the need to include things you don't need, and the inability of the Atom format to allow physical embedding of hierarchical data.

An atom:entry minimally needs an atom:id, either an atom:link or atom:content, atom:title, and atom:updated. Also, if it's standalone, it needs an atom:author. Let's say we did in fact want to embed hierarchical content and we don't really care about title or author as the data is automatically generated. I might then propose this:

<entry>

<id>tag:a unique key</id>

<title/>

<author><name>MegaCorp LLC</name></author>

<updated>timestamp of last DB change</updated>

<content type="application/atom+xml">

<feed> ... it's turtles all the way down! ... </feed>

</content>

</entry>

Requestors could specify the inline hierarchy depth they want. Subtrees below that depth can still be linked to via content@src. And when you get to your leaf nodes, just use whatever content type you desire.
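As a rough illustration of the depth idea, the following Python sketch renders a tree as nested atom:entry elements, inlining children down to a requested depth and switching to content@src below that. The dict shape (`id`, `updated`, `url`, `children`, `value`) and the function name are hypothetical, and the inner atom:feed omits its own metadata for brevity, so treat this as a sketch rather than a validating implementation.

```python
import xml.etree.ElementTree as ET

ATOM = "http://www.w3.org/2005/Atom"


def build_entry(node, depth):
    """Render `node` as an atom:entry, inlining children up to `depth` levels.

    `node` is a hypothetical dict with "id", "updated", "url", and either
    "children" (a list of nodes) or "value" (leaf text).
    """
    entry = ET.Element(f"{{{ATOM}}}entry")
    ET.SubElement(entry, f"{{{ATOM}}}id").text = node["id"]
    ET.SubElement(entry, f"{{{ATOM}}}title")  # empty: data is machine generated
    author = ET.SubElement(entry, f"{{{ATOM}}}author")
    ET.SubElement(author, f"{{{ATOM}}}name").text = "MegaCorp LLC"
    ET.SubElement(entry, f"{{{ATOM}}}updated").text = node["updated"]

    children = node.get("children", [])
    if not children:
        # Leaf node: any content type would do; plain text is used here.
        content = ET.SubElement(entry, f"{{{ATOM}}}content", type="text")
        content.text = node.get("value", "")
        return entry

    content = ET.SubElement(entry, f"{{{ATOM}}}content", type="application/atom+xml")
    if depth > 0:
        # Inline the subtree: turtles for `depth` more levels.
        feed = ET.SubElement(content, f"{{{ATOM}}}feed")
        for child in children:
            feed.append(build_entry(child, depth - 1))
    else:
        # Below the requested depth: link to the subtree instead.
        content.set("src", node["url"])
    return entry
```

Calling `ET.tostring(build_entry(tree, 1), encoding="unicode")` would serialize one inlined level, with deeper subtrees reachable through the `src` links.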

Alternatively, if you need a completely general graph structure, there's always RDF. Which can also be enclosed inside atom:content.

The above is about as minimal as I can think of. It does require a unique ID, so if you can't generate that you're out of luck. I think unique IDs are kind of general. It also requires an author, which can be awfully useful in tracking down copyright and provenance issues. So that's pretty generic too, and small in any case.

Of course this type of content would be fairly useless in a feed reader, but it would get carried through things like proxies, aggregators, libraries, etc. And if you also wanted to be feedreader friendly for some reason, the marginal cost of annotating with title and summary is minimal.

2007/06/01

Google += Feedburner

The local weather forecast calls for general euphoria with intermittent periods of off-the-rails delight.

2007/05/26

2007/05/18

Goodbye AOL; Hello Google!

I don't know yet if I'll continue using this blog; but regardless, http://abstractioneer.org will always resolve to where I'm blogging (anything can be solved with one level of indirection). And =john.panzer will always reach me.

2007/05/16

New Journals features: Video, pictures, mobile... and Atom!

We've also made some changes to our Atom API to bring it more into line with the draft APP standard; it's not 100% there yet but it's close and certainly usable.

2007/05/15

At iiw2007a: Concordia (Eve Maler)

Eve draws up a diagram showing how 'bootstrapping' works in SAML/Liberty WS. Discussion ensues with many queries about Concordia. More questions than answers, but I think that people have a lot of related/interlocking problems that need solving.

Starting from OpenID, it sounds to me like all these cases are a subset of the "access a service on behalf of a user" use case; hopefully solving either one will help with the other.

2007/05/14

At IIW2007

So far so good; this curl command posts a blog entry on my Atom blog service:

curl -k -v -sS --include --location-trusted --request POST --url 'https://monotreme:4279/_atom/collection/blogssmoketester' --data @/tmp/ieRN0zhgh6 --header 'Content-Type: application/atom+xml;charset=utf-8' --header 'Authorization: OpenAuth token="%2FwEAAAAABYgbMtk4J7Zwqd8WHKjNF6fgJSYe4RhTuitkNyip%2BEru%2FY43vaGyE2fTlxKPAEkBC%2Bf5lhWg18CE2gaQtTVQy0rpillqtUVOOtrf1%2BLzE%2BNTcBuFJuLssU%2B6sc0%3D" devid="co1dDRMvlgZJXvWK"'

Note that the token, which gives authorization and authentication, is obtained with a separate login call to an internal OpenAuth server. It looks like I need both the token and the devid; the devid essentially identifies the agent representing the user.

I should be able to post this curl command line with impunity because it shouldn't expose any private data, unlike the HTTP Basic Auth equivalent which exposes the user's password in nearly clear text. This also implies that it should be possible to avoid using TLS.

Now, if I had a standard way to get a token for an OpenID session, I could pass that in like so:

Authorization: OpenID token="...."

And my server will advertise that it accepts all three -- Basic, OpenAuth, and OpenID. I hope to talk with like minded people about this at IIW.

2007/05/07

Sun += OpenID

2007/05/04

AOL OpenAuth and Atom Publishing Protocol

So, here are my thoughts. Let's say the client has a token string which encapsulates authentication and authorization. They need to send this along with an Atom Publishing Protocol (APP) request.

Windows Live and GData both implement custom RFC 2617 WWW-Authenticate: header schemes. Unfortunately they don't follow exactly the same pattern, or I'd just copy it. But using RFC 2617 is clearly the right approach if the server can support it. So here's a proposal:

If a client has an OpenAuth token, it sends an Authorization: header. The format looks like this:

Authorization: OpenAuth token="..."

where ... contains the base64-encoded token data (an opaque string, essentially).

When there is a problem, or the Authorization: header is missing, a 401 response is returned with a WWW-Authenticate: header.

401 Need user consent

...

WWW-Authenticate: OpenAuth realm="RealmString",fault="NeedConsent",url="http://my.screenname.aol.com/blah?a=boof&b=zed&...."

where the status code contains a human readable message, and the WWW-Authenticate header contains the precise fault code -- NeedToken, NeedConsent, ExpiredToken. If present, the url parameter gives the URL of an HTML page which can be presented to the end user to login or grant permissions. For example it can point to a login page if the fault is NeedToken. A client would then need to do the following in response:

- Append a "&succUrl=..." parameter to the url, telling the OpenAuth service where to go when the user successfully logs in or grants permission.

- Open a web browser or browser widget control with the given composed URL, and present to the user.

- Be prepared to handle an invocation of the succUrl with an appended token parameter, giving a new token to use for subsequent requests.
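The client-side steps above can be sketched in Python roughly as follows. This is a sketch under assumptions, not a reference client: the function names are made up, the challenge parser only handles simple quoted name="value" pairs, and the callback parameter is assumed to be literally named `token`.

```python
import re
from urllib.parse import parse_qs, quote, urlsplit


def parse_challenge(header_value):
    """Split 'OpenAuth realm="...",fault="...",url="..."' into (scheme, params)."""
    scheme, _, rest = header_value.partition(" ")
    params = dict(re.findall(r'(\w+)="([^"]*)"', rest))
    return scheme, params


def consent_url(header_value, succ_url):
    """Return the URL to show the user, or None if the challenge has no url."""
    scheme, params = parse_challenge(header_value)
    if scheme != "OpenAuth" or "url" not in params:
        return None
    separator = "&" if "?" in params["url"] else "?"
    return f'{params["url"]}{separator}succUrl={quote(succ_url, safe="")}'


def token_from_callback(callback_url):
    """Pull the new token out of an invoked succUrl.

    Assumes the appended parameter is named "token".
    """
    values = parse_qs(urlsplit(callback_url).query).get("token")
    return values[0] if values else None
```

A desktop client might pass the result of `consent_url` to `webbrowser.open` and listen on a local port for the succUrl invocation; a browser widget could intercept the navigation instead.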

As a wrinkle, to enhance autodiscovery, perhaps we should allow an "Authorization: OpenAuth" header with no token on any request (including HEAD). The server could then respond with a 401 and fault="NeedToken", telling the client what URL to use for login authentication. The interesting thing about this is that the protocol is then truly not tied to any particular authentication service -- anyone who implements this fairly simple protocol with opaque tokens can then play with anyone else. The whole thing could be built on top of OpenID, for example.

Perhaps this doesn't quite work. I notice that the Flickr APIs don't do this, and instead have non-browsers establish a session with a client-generated unique key ("frob"). But this requires that users end up looking at a browser screen that tells them to return to their application to re-try the request. Which the above scheme could certainly do as well, by making the succUrl point at such a page. So is there a reason why Flickr doesn't redirect? There's likely a gaping security hole here that I'm just missing.
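On the server side, the autodiscovery wrinkle amounts to treating a token-less "Authorization: OpenAuth" header the same as a missing one. A minimal Python sketch, assuming a hypothetical `check_token` callable that returns None for a good token or one of the fault codes otherwise; only the "Need user consent" reason phrase comes from the proposal, the other phrases are placeholders:

```python
import re

# Reason phrases per fault; only "Need user consent" appears in the
# proposal, the others are assumed for illustration.
REASONS = {
    "NeedToken": "Need token",
    "NeedConsent": "Need user consent",
    "ExpiredToken": "Token expired",
}


def challenge(authorization, check_token, login_url, realm="RealmString"):
    """Return None if the request may proceed, else (status_line, www_authenticate).

    `authorization` is the raw Authorization header value or None.
    `check_token` is a hypothetical callable: token -> None or a fault code.
    """
    match = re.fullmatch(r'OpenAuth\s+token="([^"]+)"', (authorization or "").strip())
    # A missing header, a bare "OpenAuth", or any other scheme means NeedToken.
    fault = check_token(match.group(1)) if match else "NeedToken"
    if fault is None:
        return None
    header = f'OpenAuth realm="{realm}",fault="{fault}",url="{login_url}"'
    return f"401 {REASONS.get(fault, 'Unauthorized')}", header
```

Because the token is opaque to everything except `check_token`, the same skeleton would work regardless of which service actually issued it.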

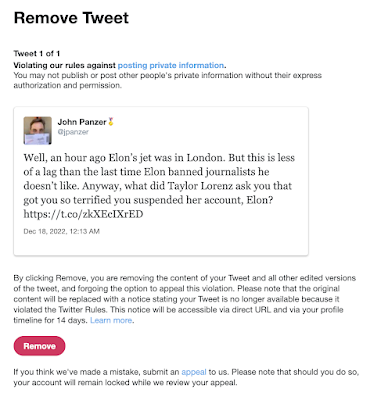

Suspended by the Baby Boss at Twitter

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Well! I'm now suspended from Twitter for stating that Elon's jet was in London recently. (It was flying in the air to Qatar at the...

-

Update 6/2/2023: I was right . These are my observations for our local conditions (Santa Clara County, July 10-12, 2020), which to summarize...

-

Just a few things to bear in mind when considering what counts as "high crimes and misdemeanors". Read this list, and, however va...